Change issues have 3 levels you need to look at

Remember, you can’t change what you can't see.

Hi there, I’m Robert. I write about behavior change in organizations.

For more: aimforbehavior | Training | SHIFT Method

There’s a conversation that happens in almost every change project. I wanted to write this piece because it happened to me, like this:

Our director asked us… how’s adoption going for the new tool?

Our manager immediately said something like…really well, we did the training, completion is high and the comms are all out!

However, we all know that this does not really mean adoption is high. In fact, six weeks after that meeting, I went down to finance to request a budget line for a project and noticed they were still using spreadsheets, not the really expensive tool the org had deployed earlier.

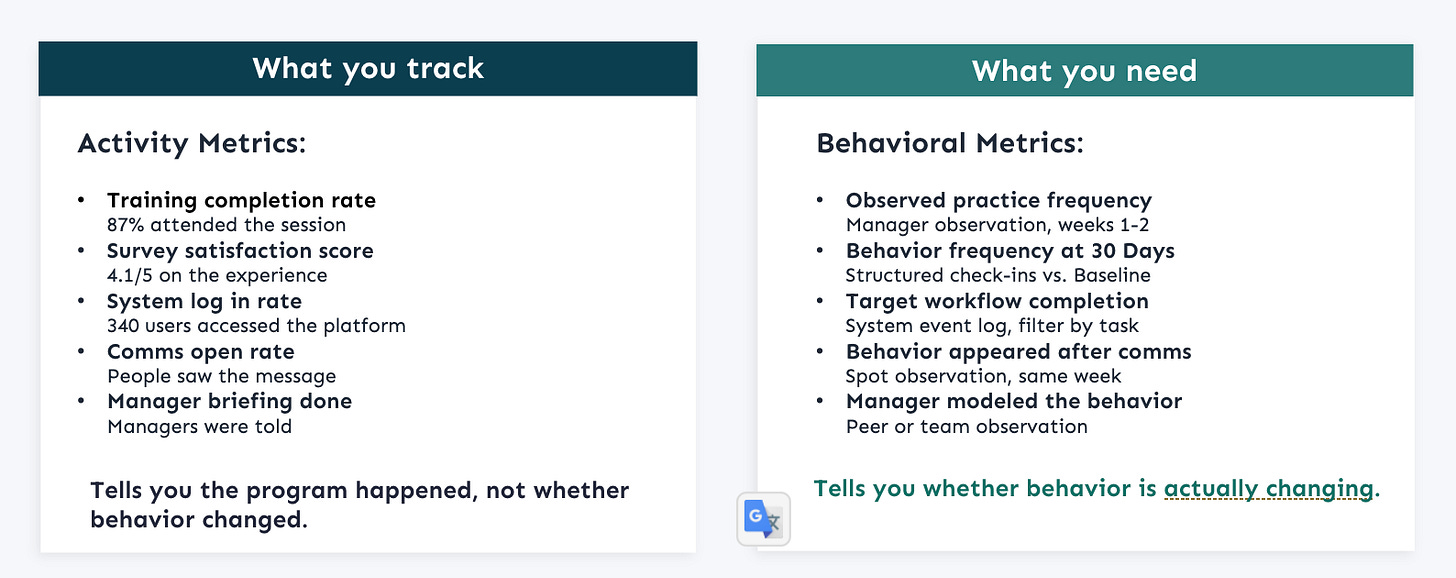

Now, there can be many issues here, but I want to focus this piece on measurement, because on that specific project we ended up realizing we were tracking the wrong things. The metrics looked good, but no one was looking at the actual behavior, which was not really changing.

Has that happened to you before in a project?

What the numbers were actually telling us

Training completion was telling us that people were showing up. Fair enough, they needed to be trained. And when we sent out the surveys, they all scored the training high, so they were satisfied… so why were they still using Excel? I was really confused.

We fell into the trap most change teams fall into. Those metrics are easy to collect, easy to present, and they make it look like progress and change are happening. However, they were a measure of perception, not of behavior.

Here’s what that looks like in practice.

A training completion rate of 87% tells you 87% attended the session. What those 87% will actually do differently after the training… that’s a separate question entirely.

A 4.1 satisfaction score tells you how people felt about the process, but not whether the process changed anything.

340 system logins in the first month tells you people accessed the platform, not whether any of them completed the workflow it was designed for.

Again, these metrics aren’t wrong…but they are not going to tell you if the behaviors are changing.
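To make the difference concrete, here is a minimal sketch of how the same event log can produce a healthy-looking activity number and a weak behavioral one. Everything in it is an assumption for illustration: the `Event` type, the `"login"` and `"workflow_completed"` labels, and the sample data are hypothetical and would need to be mapped to whatever your own system actually records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user: str
    action: str  # hypothetical labels: "login" or "workflow_completed"


def activity_vs_behavior(events, target_users):
    """Compare an activity metric (logins) with a behavioral one
    (share of target users who completed the intended workflow)."""
    targets = set(target_users)
    total_logins = sum(1 for e in events if e.action == "login")
    logged_in = {e.user for e in events if e.action == "login"} & targets
    completed = {e.user for e in events if e.action == "workflow_completed"} & targets
    behavior_rate = len(completed) / len(targets) if targets else 0.0
    return {
        "total_logins": total_logins,          # activity: looks like progress
        "users_who_logged_in": len(logged_in),  # still activity
        "behavior_rate": behavior_rate,         # behavior: did they do the thing?
    }


# Made-up sample data, not figures from the project described above.
events = [
    Event("ana", "login"),
    Event("ana", "login"),
    Event("ben", "login"),
    Event("cara", "login"),
    Event("ben", "workflow_completed"),
]
result = activity_vs_behavior(events, ["ana", "ben", "cara", "dev"])
print(result)
```

In this made-up sample, three of four people logged in, but only one of four completed the workflow. The login count alone would never show that.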

The story keeps repeating itself…

Let me tell you about a project I was brought in to help with… this time as a consultant and not as an employee.

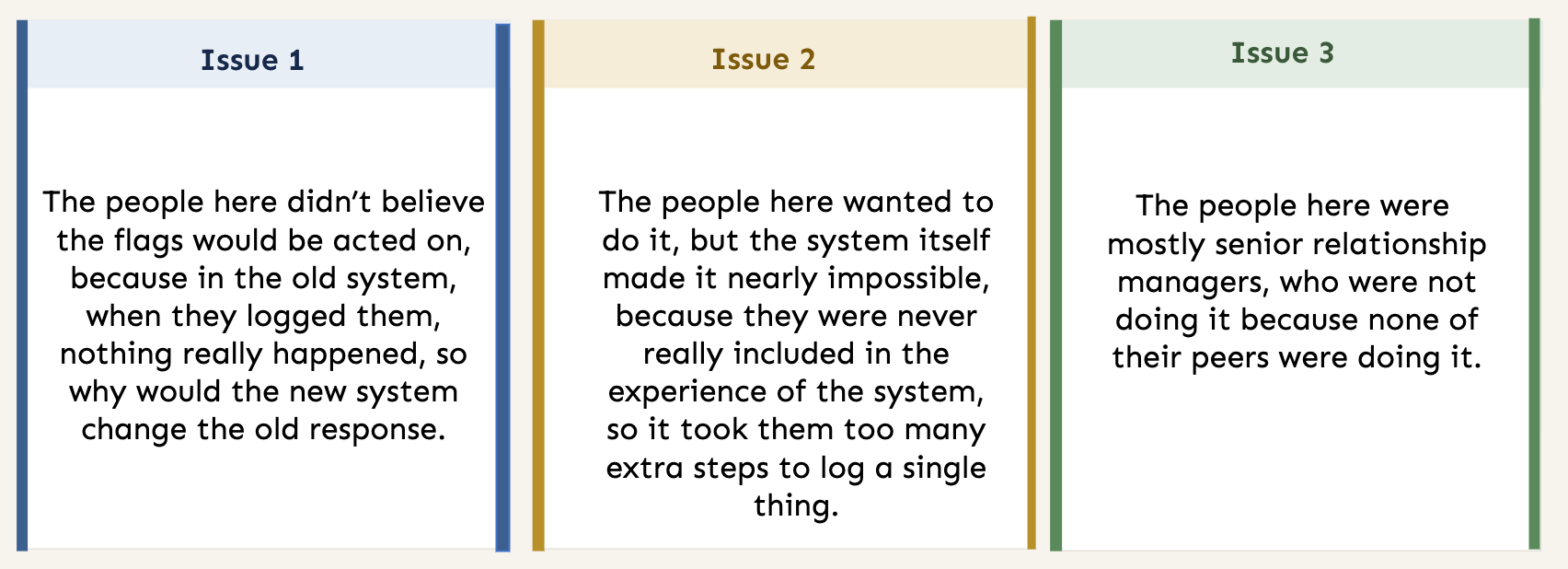

An FMCG company had rolled out a new risk-flagging system. They wanted the relationship managers to log client risk flags within 24 hours of identifying them.. nothing too hard.

Adoption a few months later was low and the program team was disappointed, as they had done everything right.. comms, training, manager briefings, launch event and so on.. so why the low engagement?

When I sat with the relationship managers, three completely different problems emerged depending on who I was talking to (which is why it is important to understand the actors).

Issue 1: The people here didn’t believe the flags would be acted on, because in the old system, when they logged them, nothing really happened. So why would the new system change the old response?

Issue 2: The people here wanted to do it, but the system itself made it nearly impossible. They were never really included in shaping the experience of the system, so it took them too many extra steps to log a single thing.

Issue 3: The people here were mostly senior relationship managers, who were not doing it because none of their peers were doing it.

One behavior, but very different barriers that needed to be addressed!

Where do barriers actually sit?

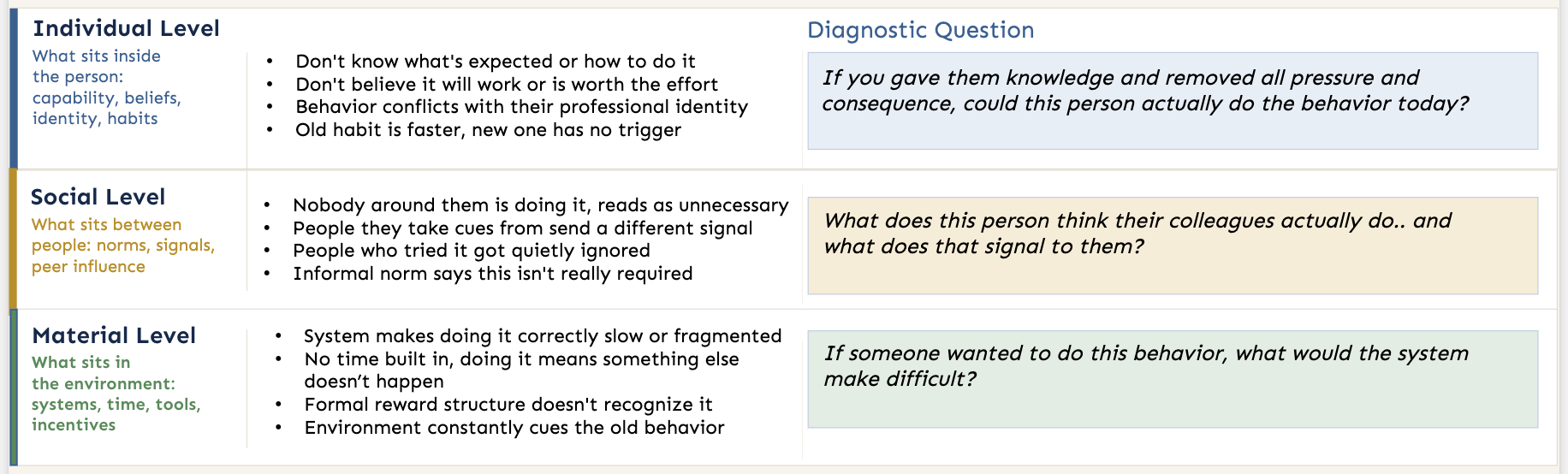

When you have a change issue in an organization, there are usually three levels where you need to look… either it’s within the individual, between people, or in the system around them.

Individual Level is the one most change programs focus on.. but as I have seen, the diagnostic layer here should not be only about comms and training. You should also look for signals like not believing the change will work, how they see themselves in the role and so on. The diagnostic question here is simple: if you gave them the knowledge and removed all pressure and consequence, could this person actually do the behavior today?

Social Level is what sits between people. If nobody around them is doing it, why should they bother? Equally, maybe the informal norm says this kind of thing isn’t really required. The diagnostic question here: what does this person think their colleagues actually do.. and what does that signal to them?

Material Level is about the environment: the system that makes doing the right thing hard, but also the policies, the structures and so on. The diagnostic question: if someone wanted to do this behavior, what would the system make difficult?

In my experience, you should always start with and give more weight to the system issues.
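If it helps to see the three levels as a working structure, here is a rough sketch of how interview notes could be tagged by level and tallied. The `Level` enum, the sample notes, and the mapping of each risk-flagging issue to a level are my own illustrative reading of the story above, not a formal tool. Putting Material first follows the advice to start with system issues; the order of the other two is an arbitrary assumption.

```python
from collections import Counter
from enum import Enum


class Level(Enum):
    INDIVIDUAL = "individual"
    SOCIAL = "social"
    MATERIAL = "material"


# The diagnostic questions from the text, keyed by level.
DIAGNOSTIC_QUESTIONS = {
    Level.INDIVIDUAL: "With the knowledge and no pressure or consequence, "
                      "could this person do the behavior today?",
    Level.SOCIAL: "What does this person think their colleagues actually do, "
                  "and what does that signal to them?",
    Level.MATERIAL: "If someone wanted to do this behavior, "
                    "what would the system make difficult?",
}


def tally_barriers(findings):
    """Count how many interview findings sit at each level."""
    return Counter(level for _note, level in findings)


def levels_to_review(tally):
    """Material (system) barriers first, then the rest by frequency."""
    return sorted(tally, key=lambda lvl: (lvl is not Level.MATERIAL, -tally[lvl]))


# Hypothetical tagging of the three issues from the risk-flagging story.
findings = [
    ("Does not believe flags will be acted on", Level.INDIVIDUAL),
    ("Too many extra steps to log a single flag", Level.MATERIAL),
    ("Senior peers are not logging flags", Level.SOCIAL),
]
tally = tally_barriers(findings)
review_order = levels_to_review(tally)
for level in review_order:
    print(level.value, tally[level], "|", DIAGNOSTIC_QUESTIONS[level])
```

The point of the tally is not precision. It is to make visible that one behavior can have barriers at more than one level, so one intervention will not cover them all.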

The measurement gap this creates

If your metrics don’t tell you which of those three levels the barrier is at, you’re pretty much flying blind. You can’t see which type of issue it is, and so you can’t know what kind of solution you need to use.

And if you can’t see what’s driving it, you default to what you know, because it is safe… more training, better comms, another stakeholder engagement round. But these are only individual-level interventions. They assume the problem is awareness, knowledge, or motivation.

However, if the issue is social or material, those interventions don’t touch it. They make the activity metrics look good, with the sessions attended and the champions recruited… but the old behavior stays the same.

What closes the gap is measuring the behavior directly, and making sure you are addressing the right issue at the right level.
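As an example of measuring the behavior directly, take the risk-flagging case: the target behavior was logging a flag within 24 hours of identifying it. A minimal sketch of that metric could look like the one below. The function name, the input shape, and the sample timestamps are all hypothetical, and it assumes you have some independent record of when each risk was identified (for example, meeting dates), which is often the hard part in practice.

```python
from datetime import datetime, timedelta


def share_logged_within(flags, window=timedelta(hours=24)):
    """Share of identified risks that were logged within `window`.

    `flags` is a list of (identified_at, logged_at) pairs, where
    logged_at is None if the flag was never logged.
    """
    if not flags:
        return 0.0
    on_time = sum(
        1
        for identified_at, logged_at in flags
        if logged_at is not None
        and timedelta(0) <= logged_at - identified_at <= window
    )
    return on_time / len(flags)


# Made-up sample data for illustration only.
flags = [
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 4, 11, 0)),  # logged after 2h
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 5, 15, 0)),  # logged after 30h
    (datetime(2024, 3, 5, 10, 0), None),                        # never logged
    (datetime(2024, 3, 6, 9, 0), datetime(2024, 3, 7, 9, 0)),   # logged at exactly 24h
]
on_time_share = share_logged_within(flags)
print(f"{on_time_share:.0%} of identified risks were logged within 24 hours")
```

A number like this tells you whether the behavior is happening. It still does not tell you why it isn’t, which is where the three levels come in.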

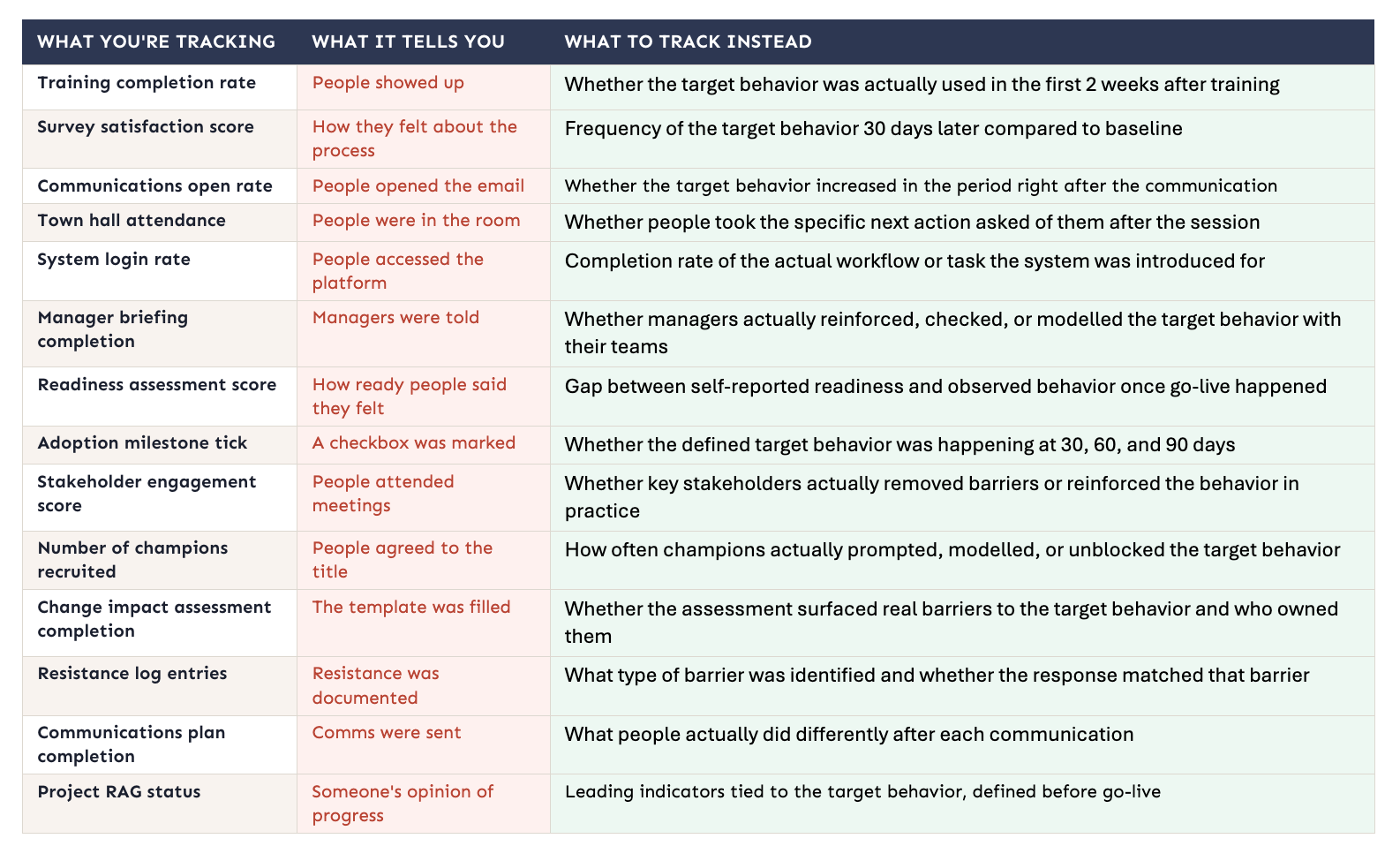

14 metrics to track instead <save this>

Here is a nice table for you, to help you track better and not fall into the trap of perception over behavior. Please feel free to adapt the “What To Track Instead” column to your own project.

What comes next

The table above helps with one part of the problem… tracking the behavior directly, once you know what to look for.

But most teams get stuck one step earlier than that. They can see adoption is weak, yet they still can’t tell why. And the truth is that without a diagnosis, the default response is always the same.. more comms, more training, another push.. regardless of whether that matches the actual barrier.

That is really the bigger issue for me, and why I write pieces like these and share them with you, because once you are measuring the wrong thing, you usually end up solving the wrong thing too.

As a change person, you have to get much clearer on what behavior you are trying to change, what is actually blocking it, and whether the metrics you are using can see that at all.

The question worth asking this week

Pick one behavior your current change program is trying to drive. It has to be a specific, observable action, not an outcome or a mindset.

Now ask: what metric are you using to track whether that behavior is happening?

If the answer is a training number or a comms stat.. you don’t have a behavioral metric. You have an activity metric dressed up as one.

It is also why I have been building a toolkit with practical tools for change, because this is where a lot of the work goes off track. If you are curious, join the wait list here

I write about organizational behavior change and advise companies on how to implement it.

If you’re working on a change program where the behavior isn’t changing, I’d be curious to hear more… reply to this email or find me on LinkedIn.

Very best, Robert